
The Wheels on the Technology Bus Go Round and Round

Emerging Technologies, Innovations & Trends, Part 1

We started roughly 11 weeks ago with the topic of digital disruptions due to the fast pace of innovation and technology adoption. As we slowly come to the end of the semester, the last topic for discussion is Emerging Technologies, Innovations & Trends. Innovations bring about change, which in turn brings about disruption. We have come full circle and are back at the beginning…

We talked about the Fast Pace of Technology Adoption, the meaning of Disruptive Technology, and discussed How People Adopt Technology Innovations, but we haven’t really focused on the actual nature of the innovations and trends we are currently facing.

To explore some of those innovations and trends in depth, let’s review some of the work of Brian Solis, a well-known author on the subject of Digital Disruption. On his website, Solis refers to himself as a “digital analyst, anthropologist and futurist.” In his own words:

I study disruptive technology, specifically innovative technology that gains so much momentum that it disrupts markets and ultimately businesses. In the past several years, disruptive technology has become so pervasive that I’ve had to further focus my work on studying only disruptive technologies that are impacting customer and employee behavior, expectations and values and affecting customer and employee experiences. I can hardly keep up with today let alone consider the potential disruption that looms ahead in every sector imaginable including new areas that will emerge and displace laggard perspectives, models and processes.

In 2009, Solis began creating the graphic below, which he calls the “Wheel of Disruption,” and every few years he updates it to add new trends or technologies. The image below is his 2015 version. The main intent of the graphic is to show the “Golden Triangle” of real-time, mobile and social, surrounded by the cloud, which, as Solis says, is “inspiring incredible innovation and thus producing new and disruptive apps, tools and services.”

Image Source: Brian Solis

In Part 2, we will look in more detail at some of the predictions Solis has made regarding upcoming technology and trends.

Fat Bottomed Strategies make the Enterprise Go Round

The Enterprise Business Architecture, Part 1

Image Source: Aviana Technologies

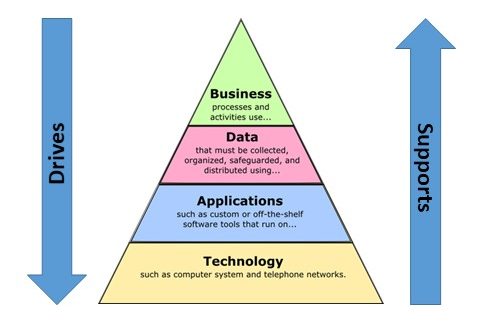

In previous classes as well as the beginning of this class, we discussed how Enterprise Architecture (EA) is traditionally thought of as having 4 distinct viewpoints:

- Business Architecture Layer

- Data Architecture Layer

- Application Architecture Layer

- Technology Architecture Layer

In recent years, security has become a much higher priority, and many organizations now treat the Security Architecture as a fifth distinct viewpoint within the EA. It is also important to note that there is a distinct flow between the layers, as indicated by the wording in the graphic above. From the top down, each layer defines the scope and boundaries of the layer below it. From the bottom up, each layer supports the architecture of the layer above it.
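To make the direction of that flow concrete, here is a minimal Python sketch that models the four traditional layers as an ordered list, with each layer scoping the one below it and supporting the one above it. The list order and the helper names `scopes` and `supports` are illustrative assumptions, not part of any EA framework or tool, and the sketch leaves out the Security Architecture viewpoint.

```python
from typing import Optional

# The four traditional EA layers, ordered top (business) to bottom (technology).
EA_LAYERS = [
    "Business Architecture",
    "Data Architecture",
    "Application Architecture",
    "Technology Architecture",
]


def scopes(layer: str) -> Optional[str]:
    """Top-down: the layer whose scope and boundaries `layer` defines (the one below it)."""
    i = EA_LAYERS.index(layer)
    return EA_LAYERS[i + 1] if i + 1 < len(EA_LAYERS) else None


def supports(layer: str) -> Optional[str]:
    """Bottom-up: the layer whose architecture `layer` supports (the one above it)."""
    i = EA_LAYERS.index(layer)
    return EA_LAYERS[i - 1] if i > 0 else None


if __name__ == "__main__":
    for layer in EA_LAYERS:
        print(f"{layer}: scopes {scopes(layer)}, supports {supports(layer)}")
```

The Business Architecture scopes everything beneath it and supports nothing above it, which mirrors its position at the top of the pyramid.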

This week, we are talking about the Enterprise Business Architecture (EBA), the keystone of our EA “pyramid” diagram. As the diagram suggests, the business layer drives all the other viewpoints of the Enterprise Architecture, while those other viewpoints ultimately support the business architecture.

Gartner defines the EBA as:

That part of the enterprise architecture process that describes — through a set of requirements, principles and models — the future state, current state and guidance necessary to flexibly evolve and optimize business dimensions (people, process, financial resources and organization) to achieve effective enterprise change.

As with information, technology and solution architectures, the EBA process translates the strategy, requirements and vision as defined in the business context into contextual-, conceptual-, logical- and implementation-level requirements. Using this process, EBA, as well as all the EA viewpoints, should demonstrate clear traceability of architectural decisions to the elements of the business strategy.

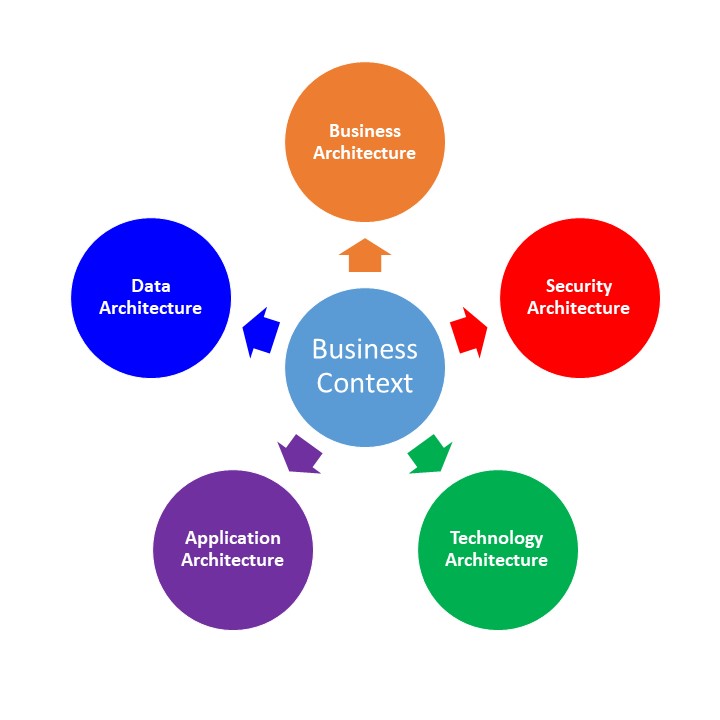

Gartner continues in the article by discussing one of the main issues with the concept of Business Architecture: it is often confused with Business Context. Business Context is defined in the article as the “process of articulating the business strategy and requirements and ensuring that the enterprise architecture effort is business-driven.” In other words, the business context defines how the EA relates to the business strategy and how the two interact. It gives us a perspective on how the business operates. Back in EA872, I wrote about how the “context provides the enterprise architects with the proper frame of reference in which to link together the EA Principles, Business Strategies and Business Initiatives.” The Business Context provides us with that frame of reference for all aspects of EA, including the Business Architecture.

So the Business Context provides the frame of reference, while the Business Architecture is the organization and structure of the business processes, organizational elements, business strategy and requirements, and business rules. Simply put, the Business Context defines the “Why” while the Business Architecture defines the “What” and “How”. This is true of each of the 4 other EA layers we have been reviewing in the past weeks. Each of those layers gives us a snapshot view into the “What” and “How” of their respective fields within the business. The Business Context not only provides the “Why” for each of the EA layers, but more importantly, it provides a method to understand how the 5 layers work together to fulfill the overall business strategy.

Security, Code Red!

The Enterprise Security Architecture, Part 1

In a recent post, I talked specifically about the security challenges faced in the Big Data field. Of the many examples referenced, the one most on everyone’s mind is probably the Equifax hack, which exposed the Social Security numbers, birth dates, addresses and more of at least 143 million people. It was a staggering amount of critical data stolen in a single breach, and in the weeks that followed, even more mind-boggling details about the breach emerged:

- The original security breach was on March 10th, 4 days after a security flaw in Apache Struts, a web application framework, was discovered and published along with a fix.

- Equifax discovered the breach on July 29th and took some of the affected systems offline for up to 11 days to resolve the issues.

- On August 1st & 2nd, 3 top executives from Equifax sold off nearly $2 million worth of company stock.

- Finally, on September 7th, Equifax publicly announced the security breach.

Since that time, numerous executives at Equifax have either left or been let go, and the investigation has shown similarities to other cyber-attacks that were carried out by Chinese hackers, but nothing conclusive has been publicly announced as of this time (Bloomberg Businessweek).

There is a lot of ambiguity regarding the timeline of the initial breach, since Equifax itself originally reported that it was hacked in mid-May. However, from a security perspective, the most critical question is why it took more than 4 months for Equifax to become aware that there had been a security breach at all. The organization was supposed to have sophisticated cyber-security policies and tools in place. Additionally, the public is most angry that the breach wasn’t disclosed until more than a month after it was discovered!
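As a quick sanity check on those gaps, the dates from the timeline above can be subtracted directly. This Python sketch assumes the year 2017 (the year of the Equifax breach) and treats each date as a whole day; it uses only the standard `datetime` module.

```python
from datetime import date

# Timeline dates from the post; the year 2017 is assumed (the year of the breach).
BREACH = date(2017, 3, 10)       # original security breach
DISCOVERED = date(2017, 7, 29)   # Equifax discovers the breach
STOCK_SALES = date(2017, 8, 1)   # first day of the executives' stock sales
ANNOUNCED = date(2017, 9, 7)     # public announcement

# Subtracting two dates yields a timedelta; .days gives the whole-day gap.
undetected_days = (DISCOVERED - BREACH).days
sales_after_discovery = (STOCK_SALES - DISCOVERED).days
undisclosed_days = (ANNOUNCED - DISCOVERED).days
total_days = (ANNOUNCED - BREACH).days

print(f"Breach to discovery:        {undetected_days} days")
print(f"Discovery to stock sales:   {sales_after_discovery} days")
print(f"Discovery to announcement:  {undisclosed_days} days")
print(f"Breach to announcement:     {total_days} days")
```

It gives 141 days from breach to discovery, 3 days from discovery to the first stock sales, and 40 days from discovery to the public announcement.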

So how does an organization protect itself in today’s security-challenged environment? There are the obvious steps that an individual or organization can take, such as virus protection, malware protection, keeping up to date on security patches, limiting access to critical systems, etc. But haphazard methods can no longer keep up with the rate at which hackers break through security measures. There needs to be a plan. For organizations, this plan can be referred to as the Security Architecture. Thorn, Christen, Gruber, Portman & Ruf (2008) defined Security Architecture as:

A Security Architecture is a cohesive security design, which addresses the requirements (e.g. authentication, authorization, etc.) – and in particular the risks of a particular environment/scenario, and specifies what security controls are to be applied where. The design process should be reproducible.

Not only do specific actions need to be taken to ensure the security of the organization’s systems and data, but there also needs to be a cohesive security design that governs how security is managed and controlled. Once the organization has established an Enterprise Architecture, this design can be even more comprehensive.

An enterprise security architecture needs to address applications, infrastructure, processes, as well as security management and operations. (Thorn et al., 2008)
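One way to picture “what security controls are to be applied where” is as a lookup from each area of the enterprise and its identified risks to a set of controls. The Python sketch below is a hypothetical illustration: the four areas follow Thorn et al. (2008), but the risks, the controls and the `security_plan` function are made up for the example. Sorting the output is one simple way to honor the requirement that the design process be reproducible.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical catalog: (area, risk) -> controls that mitigate it.
# Areas follow Thorn et al. (2008); the risks and controls are illustrative only.
CONTROL_CATALOG: Dict[Tuple[str, str], List[str]] = {
    ("applications", "unpatched software"): ["patch management", "vulnerability scanning"],
    ("applications", "unauthorized access"): ["authentication", "authorization"],
    ("infrastructure", "network intrusion"): ["firewall", "intrusion detection"],
    ("processes", "undetected breach"): ["log monitoring", "incident response plan"],
    ("security management and operations", "outdated controls"): ["periodic security review"],
}


def security_plan(risks: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Map each area's identified risks to the controls applied there.

    Areas and controls are sorted so the same inputs always produce the same
    plan. Risks with no catalog entry are flagged for review, not ignored.
    """
    plan: Dict[str, List[str]] = {}
    for area in sorted(risks):
        controls: Set[str] = set()
        for risk in risks[area]:
            fallback = [f"REVIEW: no control for '{risk}'"]
            controls.update(CONTROL_CATALOG.get((area, risk), fallback))
        plan[area] = sorted(controls)
    return plan


if __name__ == "__main__":
    example = {
        "processes": ["undetected breach"],
        "applications": ["unpatched software", "unauthorized access"],
    }
    for area, controls in security_plan(example).items():
        print(area, "->", controls)
```

Flagging unmapped risks instead of silently dropping them keeps gaps in the design visible, which is the point of having a plan rather than haphazard measures.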

As in other aspects of Enterprise Architecture, a Security Architecture brings a measure of standardization, which simplifies security governance and can also bring cost savings to the organization. Security resources can be deployed across the enterprise to minimize potential risks. Of course, if keeping security measures up to date is not part of the Security Architecture, then, as with Equifax, there will be harsh consequences.

References:

Thorn, A., Christen, T., Gruber, B., Portman, R. & Ruf, L. (2008). What is a Security Architecture. Information Security Society Switzerland.

Let’s Talk About Tech, Baby…

The Enterprise Technology Architecture, Part 1

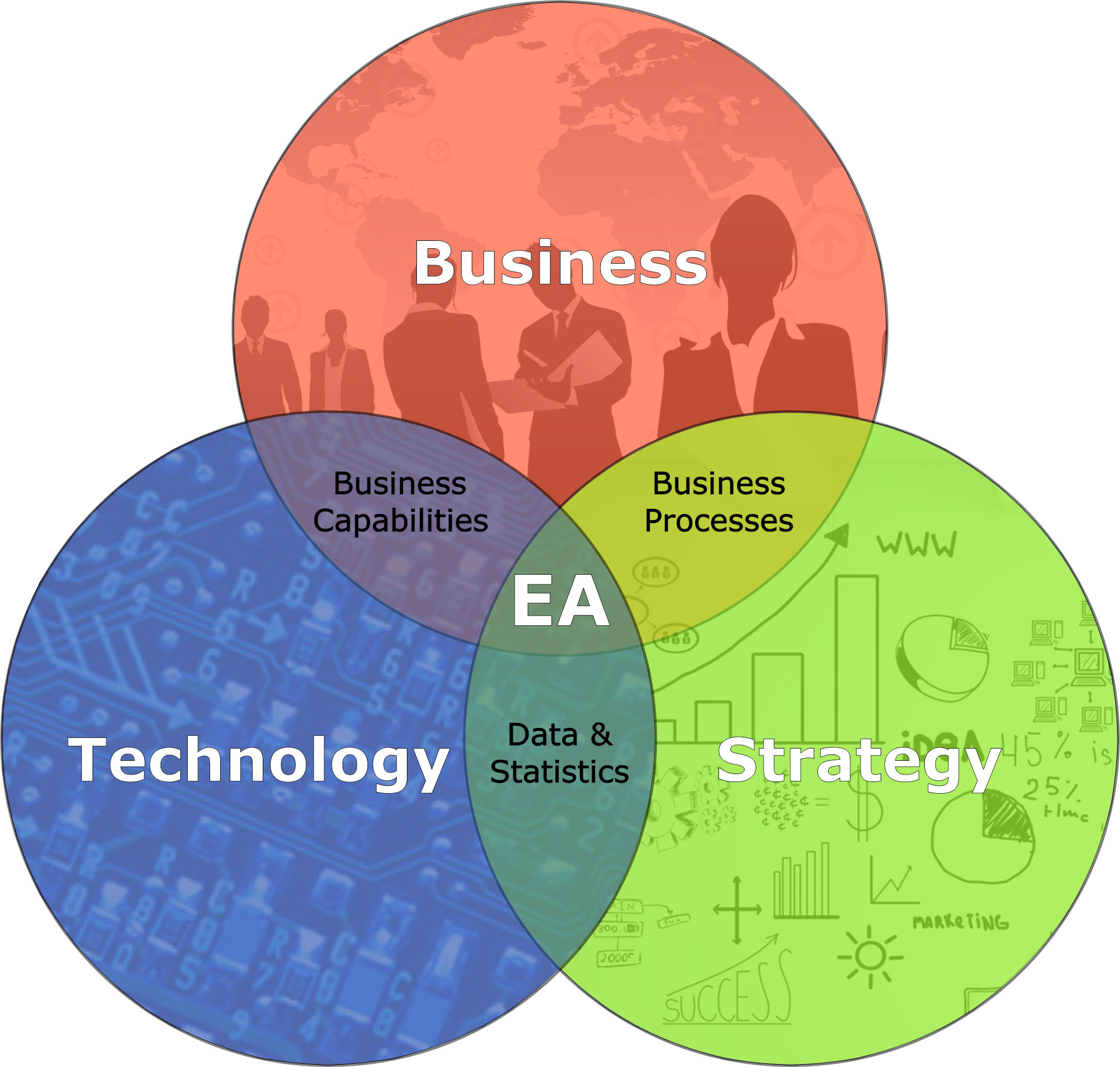

Back in EA871, we were introduced to the concept of Enterprise Architecture and how it holistically links strategy, business and technology together. I’ve frequently described the Master’s program to my friends and associates as a combination of IT, an MBA program and Project Management.

EA has been defined by various technology organizations as:

- Gartner – Enterprise architecture is a discipline for proactively and holistically leading enterprise responses to disruptive forces by identifying and analyzing the execution of change toward desired business vision and outcomes. EA delivers value by presenting business and IT leaders with signature-ready recommendations for adjusting policies and projects to achieve target business outcomes that capitalize on relevant business disruptions. (http://www.gartner.com/it-glossary/enterprise-architecture-ea/)

- MIT Center for Information Systems Research – The organizing logic for business processes and IT infrastructure reflecting the integration and standardization requirements of the firm’s operating model. (http://cisr.mit.edu/research/research-overview/classic-topics/enterprise-architecture/)

- Microsoft – An enterprise architecture is a conceptual tool that assists organizations with the understanding of their own structure and the way they work. It provides a map of the enterprise and is a route planner for business and technology change. (https://msdn.microsoft.com/en-us/library/ms978007.aspx)

For me, the easiest way to describe EA is as the intersection of Business, Strategy and Technology.

We’ve talked about various aspects of EA strategy, but now let’s talk about the technology. What do we actually mean by technology architecture? Michael Platt, a senior engineer at Microsoft, defined it within the framework of Enterprise Architecture:

A technology architecture is the architecture of the hardware and software infrastructure that supports the organization and implements the operational (or non functional) requirements, particularly the application and information architectures of the organization. It describes the structure and inter-relationships of the technologies used, and how those technologies support the operational requirements of the organization.

A good technology architecture can provide security, availability, and reliability, and can support a variety of other operational requirements, but if the application is not designed to take advantage of the characteristics of the technology architecture, it can still perform poorly or be difficult to deploy and operate. Similarly, a well-designed application structure that matches business process requirements precisely—and has been constructed from reusable software components using the latest technology—may map poorly to an actual technology configuration, with servers inappropriately configured to support the application components and network hardware settings unable to support information flow. This shows that there is a relationship between the application architecture and the technology architecture: a good technology architecture is built to support the specific applications vital to the organization; a good application architecture leverages the technology architecture to deliver consistent performance across operational requirements. (Platt, 2002)

In other words, it’s not the hardware or software themselves, but the underlying system framework. In the same way that EA provides standards and guidelines for technology in business, the Enterprise Technology Architecture (ETA) focuses on standards and guidelines for the specific technologies used within the enterprise. Gartner puts it this way: “The enterprise technology architecture (ETA) viewpoint defines reusable standards, guidelines, individual parts and configurations that are technology-related (technical domains). ETA defines how these should be reused to provide infrastructure services via technical domains.”

So ETA is a formalized set of hardware and software that meets various business objectives and goals, along with the standards and guidelines that govern the acquisition, preparation and use of that hardware and software. These can include servers, middleware, products, services, procedures and even policies. To be clear, ETA is not the same as System Architecture. System Architecture deals with specific applications and data, along with the business processes which they support. ETA is the technological framework which supports and enables those application and data services.
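To illustrate the “reusable standards” idea, the sketch below models a tiny ETA standards catalog that maps technical domains to approved products and checks a proposed component against it. The domains, product names, `Component` class and `check_compliance` function are all hypothetical; a real ETA catalog would likely also record versions, configurations and lifecycle status.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class Component:
    domain: str   # technical domain, e.g. "database" or "middleware"
    product: str  # hypothetical product name


# Hypothetical ETA standards catalog: technical domain -> approved, reusable products.
ETA_STANDARDS: Dict[str, Set[str]] = {
    "server os": {"linux-standard-build"},
    "database": {"relational-db-a", "document-db-b"},
    "middleware": {"message-queue-c"},
}


def check_compliance(component: Component) -> str:
    """Report whether a proposed component uses an approved standard for its domain."""
    approved = ETA_STANDARDS.get(component.domain)
    if approved is None:
        return f"no standard defined for domain '{component.domain}'"
    if component.product in approved:
        return "compliant"
    return f"non-standard: choose from {sorted(approved)}"


if __name__ == "__main__":
    proposals = (
        Component("database", "relational-db-a"),
        Component("middleware", "custom-bus"),
        Component("storage", "san-x"),
    )
    for proposal in proposals:
        print(proposal, "->", check_compliance(proposal))
```

The “no standard defined” case is a reminder that the catalog itself must evolve as new technical domains appear.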

References:

Platt, M. (2002). Microsoft Architecture Overview. Retrieved from https://msdn.microsoft.com/en-us/library/ms978007.aspx

Come on… Big Data! Big Data!

The Enterprise Data Architecture, Part 1

With the explosive growth of the Internet and, more recently, the Internet of Things (the rise of the machines…!!!), there has been a corresponding explosion in the amount of data generated and collected by all those connected devices and software. However, along with that vast volume of data has come an even greater challenge: dealing with it. As early as 2001, various IT analysts started reporting on potential issues that would arise from the massive amounts of data being generated. Most notably, an article by Gartner analyst Doug Laney summarized the various issues attached to Big Data (as it’s commonly called) into what’s known as the 3Vs:

- Data Volume – The depth/breadth of data available

- Data Velocity – The speed at which new data is created

- Data Variety – The increasing variety of formats of the data

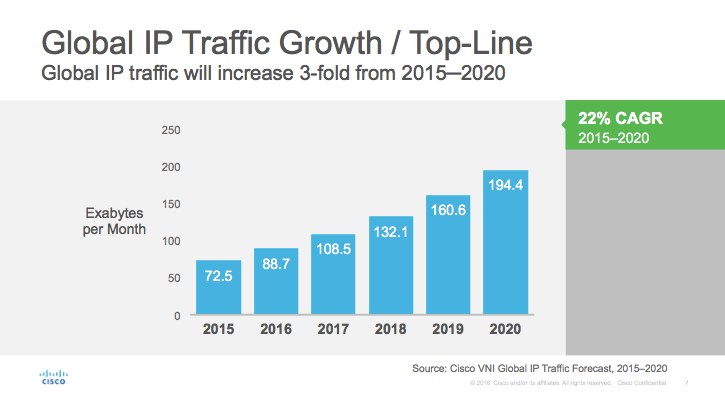

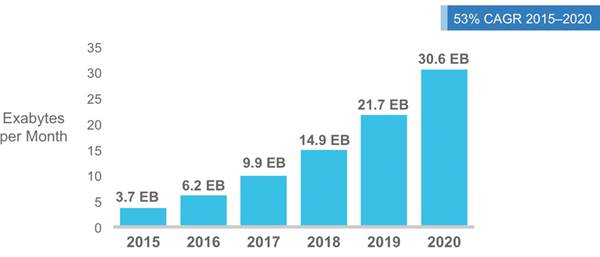

Now, 16 years after that article was written, we still face many of the same challenges. Even though technology has improved to handle larger amounts of data processing and analytics, the amount of source data has continued to grow at exponential rates. Each year, Cisco releases a Visual Networking Index that shows the previous year’s data along with predictions for the next few years. In their 2016 report (Cisco, 2016), the prediction was that by 2020 there would be over 8 billion handheld/personal mobile-ready devices as well as over 3 billion M2M devices (other connected devices such as GPS, asset tracking systems, device sensors, medical applications, etc.) in use, consuming a combined 30 million terabytes of mobile data traffic per month. And that is just mobile data.

So what are the real challenges being faced due to this exponential growth of data? Here are some facts to consider as posted by Waterford Technologies:

- According to estimates, the volume of business data worldwide, across all companies, doubles every 1.2 years.

- Poor data can cost businesses 20%–35% of their operating revenue.

- Bad data or poor data quality costs US businesses $600 billion annually.

- According to execs, the influx of data is putting a strain on IT infrastructure, with 55 percent of respondents reporting a slowdown of IT systems and 47 percent citing data security problems in a global survey from Avanade.

- In that same survey, by a small but noticeable margin, executives at small companies (fewer than 1,000 employees) are nearly 10 percent more likely to view data as a strategic differentiator than their counterparts at large enterprises.

- Three-quarters of decision-makers (76 per cent) surveyed anticipate significant impacts in the domain of storage systems as a result of the “Big Data” phenomenon.

- A quarter of decision-makers surveyed predict that data volumes in their companies will rise by more than 60 per cent by the end of 2014, with the average of all respondents anticipating a growth of no less than 42 per cent.

- 40% projected growth in global data generated per year vs. 5% growth in global IT spending.
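Two of those figures can be turned into simple compound-growth arithmetic. Doubling every 1.2 years implies an annual growth factor of 2^(1/1.2), or roughly 78% per year, and 40% data growth against 5% spending growth means data outpaces budgets by a factor of (1.40/1.05)^n after n years. The Python sketch below assumes constant rates, which real growth will not follow exactly; the function name `data_to_spend_ratio` is illustrative.

```python
# Assumes growth rates stay constant year over year (a simplification).
DOUBLING_PERIOD_YEARS = 1.2
annual_factor = 2 ** (1 / DOUBLING_PERIOD_YEARS)  # growth multiplier per year


def data_to_spend_ratio(years: int, data_growth: float = 0.40, spend_growth: float = 0.05) -> float:
    """How many times more data has grown than IT spending after `years` years of compounding."""
    return ((1 + data_growth) / (1 + spend_growth)) ** years


if __name__ == "__main__":
    print(f"Doubling every {DOUBLING_PERIOD_YEARS} years = {annual_factor - 1:.0%} growth per year")
    for years in (1, 3, 5):
        print(f"After {years} year(s): data grew {data_to_spend_ratio(years):.2f}x relative to spending")
```

Under those assumptions, after five years data volume has grown about 4.2 times faster than IT spending.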

Datamation put together a list of Big Data challenges, a few of which I want to highlight specifically:

- Dealing with Data Growth – As already mentioned above, the amount of data is growing year over year, so a solution that works today may not work well tomorrow. Investigating and investing in technologies that can grow along with the data is critical.

- Generating Insights in a Timely Manner – Generating and collecting massive amounts of data is a challenge in itself. But more importantly, what do we do with all that data? If the data being generated is not analyzed and used to benefit the organization, then the effort is wasted. New tools to analyze data are created and released every year, and these need to be evaluated to see whether there are organizational benefits to be gained.

- Validating Data – If your organization is generating and collecting massive amounts of data, verifying the integrity of that data is just as important as processing and analyzing it. This is especially important in the quickly expanding field of medical records and health data.

- Securing Big Data – Additionally, the security of the data is a rapidly growing concern. As seen in recent breaches such as the Equifax hack, the sophistication of hacking and phishing is growing at a rate equivalent to the volume of big data itself. If the sources of data cannot provide adequate security measures, the integrity of the data can be called into question, among many other issues.
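A common way to verify that data arrived intact is to record a cryptographic checksum at the source and recompute it at the destination. The Python sketch below uses SHA-256 from the standard library `hashlib` module; the file name and contents in the usage block are hypothetical. Note that a plain checksum detects accidental corruption or modification, but by itself does not prove who produced the data; that would require something like an HMAC or a digital signature.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # iter() with a sentinel keeps calling f.read() until it returns b"" (EOF).
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(path: Path, expected_hex: str) -> bool:
    """True if the file still matches the checksum recorded at the source."""
    return sha256_of(path) == expected_hex.lower()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        # Hypothetical health-data extract used only for the demonstration.
        record = Path(tmp) / "readings.csv"
        record.write_bytes(b"patient_id,temp_f\n1001,98.6\n")
        recorded = sha256_of(record)  # checksum captured at the source

        print(verify(record, recorded))  # True: data unchanged
        record.write_bytes(b"patient_id,temp_f\n1001,89.6\n")
        print(verify(record, recorded))  # False: data was altered
```

Reading in chunks keeps memory use flat no matter how large the file is, which matters at Big Data volumes.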

Big Data is here to stay, and it’s only going to get bigger. Is your company ready for it?

References:

Cisco (2016). Cisco Visual Networking Index. Retrieved from http://www.cisco.com/c/en/us/solutions/service-provider/visual-networking-index-vni/index.html